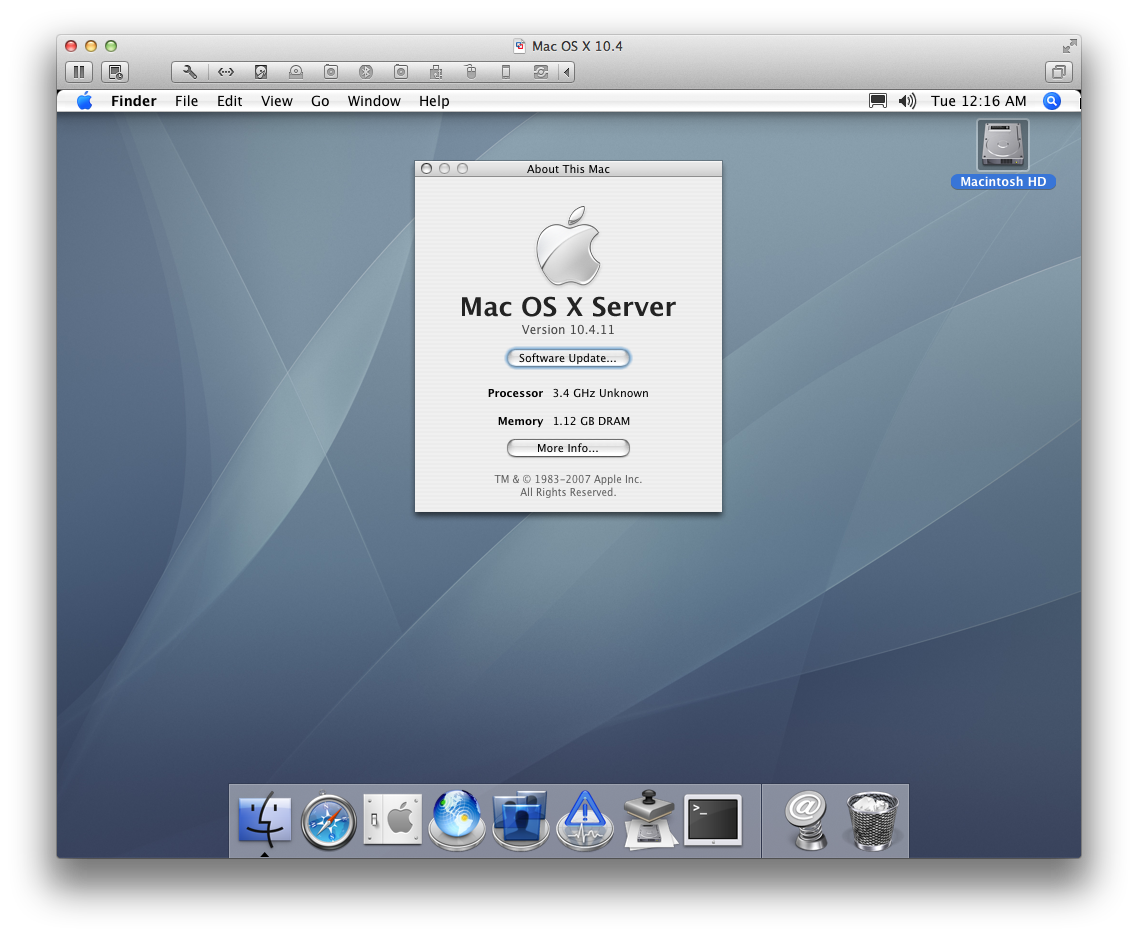

Mac OS X 10.4 under VMware Fusion on Modern CPUs

17 Dec 2013, 09:38 PST

Running 10.4.11 under VMware Fusion 6

Introduction

I recently agreed to port a small piece of code to Tiger (10.4), assuming that it would be relatively easy to bring Tiger up in VMware Fusion on my Mavericks desktop; I already keep VMs for all of the other Mac OS X releases starting from 10.6.

As it turns out, it wasn't so easy; after downloading a copy of 10.4 Server from the Apple Developer site, I found that the installer DVD would panic immediately on boot. Apparently Tiger uses the older CPUID[2] mechanism for fetching processor cache and TLB information (see xnu's set_intel_cache_info() and page 3-171 of the Intel® 64 and IA-32 Architectures Software Developer’s Manual, Volume 2).

Virtualized systems query the host's real CPUID data to acquire basic information on supported CPU features; newer processors -- mine included -- do not include cache descriptor information in CPUID[2]. Unable to read the cache descriptors from CPUID[2], the Tiger kernel left the cache line size initialized to 0 in set_intel_cache_info(), and then panicked immediately thereafter when initializing the commpage and checking for (and not finding) a required 64 byte cache line size.
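To make the failure mode concrete, here is a minimal C sketch of how CPUID[2] descriptor bytes can be scanned, and how the line size can be derived from CPUID[4] instead. The function names are mine and are not taken from xnu; the 0xFF descriptor ("no cache information in leaf 2, use leaf 4") and the leaf 4 EBX layout follow the Intel manual. The functions take raw register values so they can be exercised without executing CPUID.

```c
#include <stdint.h>
#include <stddef.h>

/* Hypothetical helper: returns 1 if the CPUID leaf 2 register values contain
 * the 0xFF descriptor, meaning cache data must be read from leaf 4 instead. */
int leaf2_defers_to_leaf4(uint32_t eax, uint32_t ebx, uint32_t ecx, uint32_t edx) {
    uint32_t regs[4] = { eax, ebx, ecx, edx };
    for (size_t r = 0; r < 4; r++) {
        /* A register with bit 31 set carries no valid descriptors. */
        if (regs[r] & 0x80000000u)
            continue;
        for (size_t b = 0; b < 4; b++) {
            /* The low byte of EAX is a repeat count, not a descriptor. */
            if (r == 0 && b == 0)
                continue;
            if (((regs[r] >> (8 * b)) & 0xFFu) == 0xFFu)
                return 1;
        }
    }
    return 0;
}

/* Leaf 4 (deterministic cache parameters): EBX bits 11:0 hold (line size - 1). */
uint32_t leaf4_line_size(uint32_t ebx) {
    return (ebx & 0xFFFu) + 1;
}
```

A kernel that only understands the leaf 2 descriptors and never consults leaf 4 ends up with no line size at all when the first function returns 1.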

In theory, VMware Fusion has support for masking CPUID values for compatibility with the guest OS by editing the .vmx configuration by hand, but I wasn't able to get any cpuid mask values to actually apply, so I eventually settled for binary patching the Tiger kernel to force proper initialization of the cache_linesize value.

If you find yourself needing to run Tiger for whatever reason, I've packaged up my patch as a small command line tool. It successfully applies against the 10.4.7 and 10.4.11 kernels that I tried. While you should run it against /mach_kernel after mounting the Tiger file system under your host OS, I found that the tool worked just fine when run directly against my raw VMware disk image file, as well as against the Mac OS X Server 10.4.7 installation DMG.
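Patching a raw disk image is possible when the target code is located by its byte pattern rather than by file path. The following C sketch shows that general search-and-replace idea only; it is not the tool's actual source, `patch_pattern` is a hypothetical name, and any byte sequences passed to it here would be placeholders rather than the real kernel instructions.

```c
#include <stddef.h>
#include <string.h>

/* Hypothetical helper: overwrite every occurrence of `find` in `buf` with
 * `repl` (both `n` bytes long) and return the number of patches applied.
 * The buffer length never changes, so offsets in the image stay valid. */
size_t patch_pattern(unsigned char *buf, size_t len,
                     const unsigned char *find,
                     const unsigned char *repl, size_t n) {
    size_t count = 0;
    if (n == 0 || len < n)
        return 0;

    for (size_t i = 0; i + n <= len; ) {
        if (memcmp(buf + i, find, n) == 0) {
            memcpy(buf + i, repl, n);
            count++;
            i += n;   /* continue after the bytes just patched */
        } else {
            i++;
        }
    }
    return count;
}
```

A real tool would want a pattern long enough to be unique, and would refuse to write anything unless the match count is exactly what it expects.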

Using the tool, I was able to install 10.4.7, upgrade to 10.4.11, and then patch the 10.4.11 VMDK to allow booting into the upgraded system.

Source Code

You can download the source here. Obviously, this is totally unsupported and could destroy all life as we know it, or just your Tiger VM; use at your own risk.

Keychain, CAs, and OpenSSL

14 May 2013, 09:39 PDT

It took me almost 13 years, but I finally sat down and solved a problem that has annoyed me since Mac OS X 10 Public Beta: synchronizing OpenSSL's trusted certificate authorities with Mac OS X's trusted certificate authorities.

The issue is simple enough; certificate authorities are used to verify the signatures on x509 certificates presented by servers when you connect over SSL/TLS. Mac OS X's SSL APIs have one set of certificates, OpenSSL has another set, and OpenSSL doesn't ship any by default.

The result of this certificate bifurcation:

- Any app using OpenSSL (curl, svn, git) will fail to validate remote certificates unless you manually add all of the CA certificates to OpenSSL's certificate store.

- If you use a custom CA (e.g., to sign certificates for your internal services), you'll need to add the CA certificate to OpenSSL's certificate store.

Given that computers can automate the mundane, I finally put aside some time to write certsync (permanent viewvc).

It's a simple, single-source-file utility that reads all trusted CAs via Mac OS X's keychain API, and then spits them out as an OpenSSL PEM file. If you pass it the -s flag, it will continue to run after the first export, automatically regenerating the output file when the system trust settings or certificate authorities are modified.
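The output format itself is simple: each certificate's DER bytes are base64-encoded, wrapped at 64 columns, and framed by BEGIN/END lines. Here is a small C sketch of just that encoding step. It is not code from certsync (which gets its certificate data from the keychain API), and `pem_write_cert` is a hypothetical name.

```c
#include <stdio.h>
#include <stddef.h>

static const char B64[] =
    "ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz0123456789+/";

/* Hypothetical helper: write `len` DER bytes to `out` as one PEM certificate.
 * Returns 0 on success, -1 if the stream reported an error. */
int pem_write_cert(FILE *out, const unsigned char *der, size_t len) {
    size_t col = 0;

    fputs("-----BEGIN CERTIFICATE-----\n", out);
    for (size_t i = 0; i < len; i += 3) {
        size_t rem = len - i;

        /* Pack up to three input bytes into a 24-bit group. */
        unsigned long v = (unsigned long)der[i] << 16;
        if (rem > 1) v |= (unsigned long)der[i + 1] << 8;
        if (rem > 2) v |= (unsigned long)der[i + 2];

        /* Emit four base64 characters, padding a short final group with '='. */
        char quad[4];
        quad[0] = B64[(v >> 18) & 0x3F];
        quad[1] = B64[(v >> 12) & 0x3F];
        quad[2] = rem > 1 ? B64[(v >> 6) & 0x3F] : '=';
        quad[3] = rem > 2 ? B64[v & 0x3F] : '=';
        fwrite(quad, 1, 4, out);

        col += 4;
        if (col == 64) {
            fputc('\n', out);
            col = 0;
        }
    }
    if (col != 0)
        fputc('\n', out);
    fputs("-----END CERTIFICATE-----\n", out);

    return ferror(out) ? -1 : 0;
}
```

Concatenating one such block per trusted CA yields a bundle in the format OpenSSL reads from cert.pem.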

I wrote the utility for use in MacPorts; it's currently available as an experimental port. If you'd like to test it out, it can be installed via:

    port -f deactivate curl-ca-bundle
    port install certsync
    port load certsync # If you want the CA certificates to be updated automatically

Note that forcibly deactivating ports is not generally a good idea, but you can easily restore your installation back to the expected configuration via:

    port uninstall certsync
    port activate curl-ca-bundle

Or, you can build and run it manually:

    clang -mmacosx-version-min=10.6 certsync.m -o certsync -framework Foundation -framework Security -framework CoreServices
    ./certsync -s -o /System/Library/OpenSSL/cert.pem

I also plan to investigate support for Java JKS truststore export (preferably before 2026).

iOS Function Patching

20 Jan 2013, 08:03 PST

On Mac OS X, mach_override is used to implement runtime patching of functions. It essentially works by marking the executable page as writable, and actually inserting a new function prologue into the target function.

On iOS, pages are W^X: a page can be writable, or executable, but it's never allowed to be both. This has required finding inventive solutions, such as trampoline pools to support things such as imp_implementationWithBlock() and libffi's closures.

However, the trampoline approach will not work for patching arbitrary OS code; you somehow need to be able to modify the code in place, but there's no way to actually write to a code page.

Last night, Mike Ash and I were jokingly discussing how we could implement this (badly) using memory protections and signal handlers.

Enter libevil:

    void (*orig_NSLog)(NSString *fmt, ...) = NULL;

    void my_NSLog (NSString *fmt, ...) {
        orig_NSLog(@"I'm in your computers, patching your strings ...");

        NSString *newFmt = [NSString stringWithFormat: @"[PATCHED]: %@", fmt];

        va_list ap;
        va_start(ap, fmt);
        NSLogv(newFmt, ap);
        va_end(ap);
    }

    evil_init();
    evil_override_ptr(NSLog, my_NSLog, (void **) &orig_NSLog);
    NSLog(@"Print a string");

You should not use this code. Seriously.

How it Works

libevil uses VM memory protection and remapping tricks to allow for patching arbitrary functions on iOS/ARM. This is similar in function to mach_override, except that libevil has to work without the ability to write to executable pages.

This is achieved as follows:

- All mapped segments of the executable to be patched are remapped to a new address for preservation.

- The target page containing the function to be patched is set to PROT_NONE, triggering a crash if one attempts to execute anything in that page.

- A custom signal handler interprets the crash:

  - If the IP of the crashed thread points at a patched function, thread state is rewritten to point at the new user-supplied function.

  - If the IP of the crashed thread points at some other address in the patched page, it is rewritten to execute from the mirrored copy of the binary.

  - If the si_addr of the crash is within the patched page, all registers containing that address are rewritten to point at the mirrored copy of the binary.
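The handler's decision logic can be sketched as a pure C function. This illustrates the three rules above and is not libevil's actual code: the `patch_t` and `fault_plan_t` types, the function name, and the single fixed `mirror_slide` offset between the original and mirrored mappings are all assumptions made for the example.

```c
#include <stdint.h>
#include <stddef.h>

typedef struct {
    uintptr_t orig_fn;   /* entry point of the function being replaced */
    uintptr_t new_fn;    /* user-supplied replacement */
} patch_t;

typedef struct {
    uintptr_t new_pc;    /* where the faulting thread should resume */
    int rewrite_regs;    /* 1 if registers holding fault_addr need redirecting */
} fault_plan_t;

/* Hypothetical: decide how to resume a thread that faulted on the PROT_NONE page. */
fault_plan_t plan_fault(uintptr_t pc, uintptr_t fault_addr,
                        uintptr_t page_start, size_t page_size,
                        intptr_t mirror_slide,
                        const patch_t *patches, size_t npatches) {
    fault_plan_t plan = { pc, 0 };
    uintptr_t page_end = page_start + page_size;

    /* Rule 1: the PC sits on a patched entry point; jump to the replacement. */
    for (size_t i = 0; i < npatches; i++) {
        if (patches[i].orig_fn == pc) {
            plan.new_pc = patches[i].new_fn;
            return plan;
        }
    }

    /* Rule 2: the PC is elsewhere in the protected page; run the mirrored copy. */
    if (pc >= page_start && pc < page_end) {
        plan.new_pc = pc + (uintptr_t)mirror_slide;
        return plan;
    }

    /* Rule 3: a data access hit the protected page; the caller must redirect
     * any register holding fault_addr to the mirror and retry the instruction. */
    if (fault_addr >= page_start && fault_addr < page_end)
        plan.rewrite_regs = 1;

    return plan;
}
```

A real handler would then write the result back into the thread state it received from the kernel before returning.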

The entire binary is remapped so as to 'correctly' handle PC-relative addressing that would otherwise fail. There are still innumerable ways that this code can explode in your face. Remember how I said not to use it?

A fancier implementation would involve performing instruction interpretation from the crashed page, rather than letting the CPU execute from remapped pages. This would involve actually implementing an ARM emulator, which seems like drastic overkill for a massive hack.

The Code

The implementation only supports ARM, so you can only test it out on your iOS device. I've posted the code and a sample application on github.

Reliable Crash Reporting - v1.1

19 Jan 2013, 12:37 PST

A bit over a year ago, I wrote a blog post on Reliable Crash Reporting, documenting the complexity of reliably generating crash reports and how seemingly innocuous decisions could lead to failure in the crash reporter, or even corruption of user data. This was based on my experience in writing and maintaining PLCrashReporter, a standalone open-source crash reporting library that I've been maintaining (and using in our production applications) since around 2008.

Given that there have been a number of new entrants into the space, including KSCrash and Crashlytics 2.0, I thought it would be fun to revisit the previous post and review the current state of the art.

While I'd suggest reading the original post for the backstory on what makes reliable crash reporting difficult -- and why it matters -- I'll repeat the most pertinent section here:

Implementing a reliable and safe crash reporter on iOS presents a unique challenge: an application may not fork() additional processes, and all handling of the crash must occur in the crashed process. If this sounds like a difficult proposition to you, you're right. Consider the state of a crashed process: the crashed thread abruptly stopped executing, memory might have been corrupted, data structures may be partially updated, and locks may be held by a paused thread. It's within this hostile environment that the crash reporter must reliably produce an accurate crash report.

Today I'll touch on two reliability issues that remain in modern crash reporters -- handling stack overflows, and async-safety. The stack overflow issue is especially frustrating to me, given that it affects PLCrashReporter, too.

Async-Safety

Imagine that the application has just acquired a lock prior to crashing. If the crash reporter attempts to acquire the same lock, it will wait forever: the crashed thread is no longer running, and it will never release the lock. When a deadlock like this occurs on iOS, the application will appear unresponsive for 20+ seconds until the system watchdog terminates the process, or the user force quits the application, and no valid crash report will be written.

In my previous post, I touched on async-safety issues around Objective-C and re-entrantly running the user's code. Most crash reporters have moved away from those APIs, but have introduced new async-safety issues in the process.

One of the common issues I found in all new reporters was reliance on the pthread(3) API to fetch thread information, including thread names. These APIs are not async-safe, however, and will acquire a global lock in most cases -- including when fetching a thread's name via pthread_getname_np(3). The result is that if your code crashes while any thread is holding the pthread thread-list lock, the entire crash reporter will deadlock. Since the crash reporters suspend all threads during reporting, this can occur even if the pthread calls themselves do not crash, but rather, a thread just happened to be executing a pthread call at the time a crash occurred.

I put together the following test case to demonstrate this issue. It will cause crash reporters that make use of pthreads to deadlock, either until the user force-quits, or until the iOS watchdog kills the process (after 20 or so seconds).

    #import <Foundation/Foundation.h>
    #import <pthread.h>
    #import <signal.h>
    #import <stdlib.h>

    static void unsafe_signal_handler (int signo) {
        /* Try to fetch thread names with the pthread API */
        char name[512];

        NSLog(@"Trying to use the pthread API from a signal handler. Is a deadlock coming?");
        pthread_getname_np(pthread_self(), name, sizeof(name));

        // We'll never reach this point. The process will stop here until the OS watchdog
        // kills it in 20+ seconds, or the user force quits it. No crash report (or a partial corrupt
        // one) will be written.
        NSLog(@"We'll never reach this point.");
        exit(1);
    }

    static void *enable_threading (void *ctx) {
        return NULL;
    }

    int main(int argc, char *argv[]) {
        /* Remove this line to test your own crash reporter */
        signal(SIGSEGV, unsafe_signal_handler);

        /* We have to use pthread_create() to enable locking in malloc/pthreads/etc -- this
         * would happen by default in any real application, as the standard frameworks
         * (such as dispatch) will trigger similar calls into the pthread APIs. */
        pthread_t thr;
        pthread_create(&thr, NULL, enable_threading, NULL);

        /* This is the actual code that triggers a reproducible deadlock; include this
         * in your own app to test a different crash reporter's behavior.
         *
         * While this is a simple test case to reliably trigger a deadlock, it's not necessary
         * to crash inside of a pthread call to trigger this bug. Any thread sitting inside of
         * pthread() at the time a crash occurs would trigger the same deadlock. */
        pthread_getname_np(pthread_self(), (char *)0x1, 1);

        return 0;
    }

This is the primary reason PLCrashReporter does not provide thread names in its crash reports; this requires either calling non-async-safe API, or directly accessing system-private structures that are often changed release-to-release. If there's significant user demand, I may consider adding optional support for fetching thread names by poking around in system-private structures.

Stack Overflow

When a thread's stack overflows, there is no stack space left over for a signal handler to use, which results in the inability to record the crash.

This can be handled partially with sigaltstack(2), which instructs the kernel to insert an alternative stack for use by the signal handler. This is functional but imperfect, as the API requires registering a custom signal stack for every thread in the process. Despite the sigaltstack(2) man page's implication that the registered stack is process-global, the stack is only enabled for the thread calling sigaltstack(2). The result is that stack overflows can only be handled on the main thread, unless additional threads are manually registered.

On Mac OS X, we can make use of a more capable API -- Mach exception handling -- to fully solve this problem. Since Mach exceptions are handled on a dedicated thread (or out of process entirely), the crashed thread's stack is entirely independent of the crash reporter. Unfortunately, the requisite Mach definitions are private on iOS, and have been since I originally wrote PLCrashReporter. This issue previously arose when Unity had their users' apps rejected in 2009, due to Mono's direct use of the Mach exc_server() API, and they were forced to release an update that avoided the use of the API in question.

Given that the structures and definitions required for a full implementation of Mach exception handling are private (at least, insofar as I've been able to determine), PLCrashReporter has long relied on sigaltstack(2) to provide the ability to report crashes on the main thread.

Unfortunately, sigaltstack(2) is broken in later iOS releases. In fact, it simply doesn't do anything at all. I've filed rdar://13002712 (SA_ONSTACK/sigaltstack() ignored on iOS) to report the issue to Apple, but in the meantime, I can see no way to detect stack overflow on iOS using only public API.

I've implemented Mach exception handling in PLCrashReporter for Mac OS X, and it could be used as a work-around on iOS, but I'm uncomfortable with providing something that relies on undocumented and/or private SPI. To make sure I wasn't missing something obvious, I even reviewed the KSCrash and Crashlytics 2.0 implementations to determine how they work around this issue, since both use Mach exceptions. Unfortunately, KSCrash appears to have copied in the private structure definitions from the kernel source, and from what I can tell from disassembling their code, Crashlytics copied the (private on iOS) Mach headers from the Mac or Simulator SDKs.

To confirm, I contacted Apple DTS. Their reply was as follows:

Our engineers have reviewed your request and have determined that this would be best handled as a bug report, which you have already filed. There is no documented way of accomplishing this, nor is there a workaround possible.

This is a frustrating position to be in; it seems the only choices are either to leave stack overflow reporting broken, or make use of seemingly private API. I've filed a radar requesting that the requisite Mach defs/headers be made public. In the meantime, I'm considering providing iOS Mach exception support as a user-configurable feature. At the very least, it could be enabled only for development builds.

Conclusion

Crash reporting is a complex enough topic that you can be reasonably assured that 1) you will always get something wrong, and 2) there is always room to improve. There are always trade-offs and edge-cases in engineering, and especially so in crash reporting, in which one operates in an environment with significant reliability restrictions, coupled with the ability to fetch, update, and permute memory and thread state at will.

When it comes to implementing something complex like crash reporting correctly, projects like Google's Breakpad deserve considerable admiration. They've invested years of very smart people's time towards getting crash reporting right, and have been deployed on a huge number of desktops via Chrome and Firefox. I'm working to incorporate some of the solid design decisions that have gone into Breakpad -- such as placing guard pages around (or locking outright) memory that is required for function after a crash.

Going forward, I'll probably be writing more informal (and shorter) posts to explore particular aspects of crash reporting. If you have any questions, or have anything you think would be worth covering here, feel free to drop me a line.